Introduction to Pods

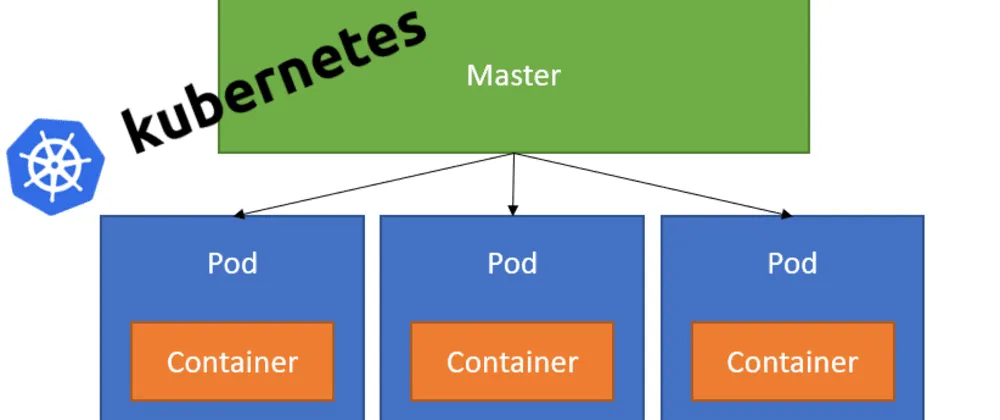

In modern server infrastructure and application deployment, Pods are a cornerstone of efficiency and scalability. In Kubernetes, a Pod is the smallest deployable unit of computing: a group of one or more containers that are scheduled together and share network and storage resources. To put it simply, a Pod is a compact, self-contained environment housing one or more tightly coupled application containers, along with the resources they need to function. This seemingly straightforward definition belies the impact Pods have on streamlining operations and making software development more agile.

The relevance of Pods in today’s tech landscape stems from their ability to provide a lightweight and flexible approach to managing application workloads. They offer a standardized unit of deployment, ensuring that applications are packaged with all necessary configurations and dependencies, thus simplifying the process of moving applications across different computing environments. Moreover, Pods are designed to be ephemeral, allowing for quick scaling and replacement, which is crucial for maintaining high availability and resilience in dynamic cloud environments.

Functionally, a Pod is a shared context for its containers. Containers in the same Pod share a network namespace, so they have the same IP address and can reach each other over localhost, and they can mount the same storage volumes to share data. Kubernetes schedules a Pod’s containers together on one node and, through higher-level controllers, restarts or replaces Pods so that the application remains available.
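To make the shared context concrete, here is a minimal sketch of a two-container Pod manifest, built as a Python dictionary and printed as JSON (a format `kubectl apply -f` accepts alongside YAML). The Pod name, container names, volume name, and shell commands are all hypothetical and chosen only for illustration.

```python
import json

# Hypothetical example: every name below ("shared-context-demo", "writer",
# "reader", "scratch") is made up for illustration.
pod_manifest = {
    "apiVersion": "v1",
    "kind": "Pod",
    "metadata": {"name": "shared-context-demo"},
    "spec": {
        # An emptyDir volume exists for the lifetime of the Pod and is
        # visible to every container that mounts it.
        "volumes": [{"name": "scratch", "emptyDir": {}}],
        "containers": [
            {
                "name": "writer",
                "image": "busybox:1.36",
                "command": ["sh", "-c",
                            "while true; do date >> /data/log.txt; sleep 5; done"],
                "volumeMounts": [{"name": "scratch", "mountPath": "/data"}],
            },
            {
                "name": "reader",
                "image": "busybox:1.36",
                "command": ["sh", "-c",
                            "touch /data/log.txt; tail -f /data/log.txt"],
                "volumeMounts": [{"name": "scratch", "mountPath": "/data"}],
            },
        ],
    },
}


def shared_volume_names(manifest):
    """Return the names of volumes mounted by every container in the Pod."""
    containers = manifest["spec"]["containers"]
    mounted = [{m["name"] for m in c.get("volumeMounts", [])} for c in containers]
    return sorted(set.intersection(*mounted)) if mounted else []


if __name__ == "__main__":
    print(json.dumps(pod_manifest, indent=2))
    print("Volumes shared by all containers:", shared_volume_names(pod_manifest))
```

Both containers mount the same `scratch` volume, so whatever the writer appends to `/data/log.txt` is visible to the reader. Because they also share the Pod’s network namespace, they could reach each other over localhost without any extra configuration.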

Benefits of Using Pods

Pods have changed how we approach scalability, resource management, and application isolation in cloud computing. As the basic unit of deployment in Kubernetes, they bring three benefits in particular.

Scalability

Scalability is a cornerstone of cloud infrastructure, and Pods play a central role in it. Imagine a scenario where user demand spikes unexpectedly. Because a Pod is a standardized, replaceable unit, Kubernetes can scale an application out by starting additional Pod replicas to handle the load, and scale it back in when demand drops. This elasticity aligns resource consumption with actual usage, which helps keep costs under control.
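As a rough illustration of how scaling out can be calculated, the sketch below applies the core ratio used by the Kubernetes Horizontal Pod Autoscaler: desired replicas = ceil(current replicas × current metric / target metric). The function name and the `min_replicas` and `max_replicas` parameters are assumptions for this example, and the real autoscaler adds tolerances and stabilization windows that are left out here.

```python
import math


def desired_replicas(current_replicas, current_metric, target_metric,
                     min_replicas=1, max_replicas=100):
    """Estimate how many Pod replicas are needed to bring a metric to target.

    Simplified sketch of the Horizontal Pod Autoscaler ratio; tolerances and
    stabilization behaviour of the real controller are deliberately omitted.
    """
    if target_metric <= 0:
        raise ValueError("target_metric must be positive")
    raw = math.ceil(current_replicas * current_metric / target_metric)
    # Clamp to the allowed range (the bounds are illustrative defaults).
    return max(min_replicas, min(max_replicas, raw))


if __name__ == "__main__":
    # 4 replicas averaging 90% CPU against a 60% target.
    print(desired_replicas(4, 90, 60))
```

With four replicas running at 90% average CPU and a 60% target, the ratio suggests six replicas. When load falls well below the target, the same formula shrinks the replica count again.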

Resource management

For resource management, Pods let you declare resource boundaries for your applications. In Kubernetes, each container in a Pod can specify requests and limits for resources such as CPU and memory: requests tell the scheduler how much capacity to reserve on a node, and limits cap what the container may consume. This fine-grained control reduces resource contention between Pods and leads to more efficient utilization and a more stable server environment.
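The following sketch shows how per-container resource settings add up to a Pod-level total. It assumes the Kubernetes quantity notation (`500m` for half a CPU core, `256Mi` for 256 mebibytes) and handles only the few suffixes shown; the container names and values are hypothetical.

```python
# Binary memory suffixes used by Kubernetes quantities (subset only).
_MEMORY_FACTORS = {"Ki": 2**10, "Mi": 2**20, "Gi": 2**30}


def parse_cpu(quantity):
    """Convert a CPU quantity such as '250m' or '2' to a number of cores."""
    if quantity.endswith("m"):
        return int(quantity[:-1]) / 1000
    return float(quantity)


def parse_memory(quantity):
    """Convert a memory quantity such as '128Mi' to bytes."""
    for suffix, factor in _MEMORY_FACTORS.items():
        if quantity.endswith(suffix):
            return int(quantity[:-len(suffix)]) * factor
    return int(quantity)  # A plain integer means bytes.


def pod_totals(containers, kind="requests"):
    """Sum CPU cores and memory bytes over all containers for one kind."""
    cpu = sum(parse_cpu(c["resources"][kind]["cpu"]) for c in containers)
    memory = sum(parse_memory(c["resources"][kind]["memory"]) for c in containers)
    return cpu, memory


if __name__ == "__main__":
    # Hypothetical containers for illustration.
    containers = [
        {"name": "app", "resources": {
            "requests": {"cpu": "500m", "memory": "128Mi"},
            "limits": {"cpu": "1", "memory": "256Mi"}}},
        {"name": "sidecar", "resources": {
            "requests": {"cpu": "250m", "memory": "64Mi"},
            "limits": {"cpu": "500m", "memory": "128Mi"}}},
    ]
    print("requests:", pod_totals(containers, "requests"))
    print("limits:", pod_totals(containers, "limits"))
```

Summing requests this way approximates what the scheduler needs to find on a node before it can place the Pod. Init containers and Pod overhead, which the real scheduler also considers, are ignored here.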

Isolation

Isolation is another key benefit. Each Pod has its own network namespace and IP address and its own set of storage volumes, so a crash or misconfiguration in one Pod is less likely to affect others, which improves the overall resilience of the system. Isolation also helps security, but it is not a complete defense on its own: by default, Pods in a Kubernetes cluster can still reach each other over the network unless network policies restrict that traffic, and containers typically share the host kernel.

In essence, the use of Pods in a GPU Cloud environment amplifies the capabilities of Kubernetes, providing a scalable, well-managed, and secure platform for deploying applications. As we continue to push the boundaries of what’s possible in the cloud, the benefits of using Pods will undoubtedly become even more pronounced, solidifying their place as a cornerstone of modern server infrastructure.

Creating and Managing Pods: A User-Friendly Approach

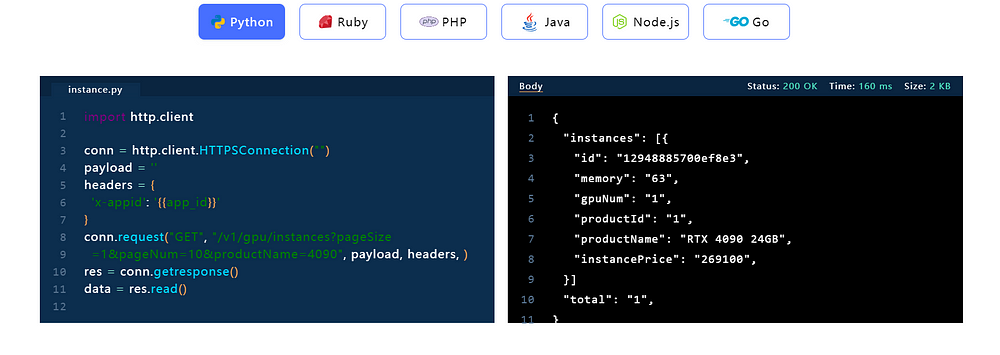

In this section, we’ll walk through a step-by-step guide to creating Pods and cover best practices for managing and optimizing their performance, using Novita AI Pods as a practical example. Note that Novita AI Pods does not use Kubernetes; it is a separate GPU cloud service aimed at everyday and professional GPU workloads.

Define the Pod Specifications

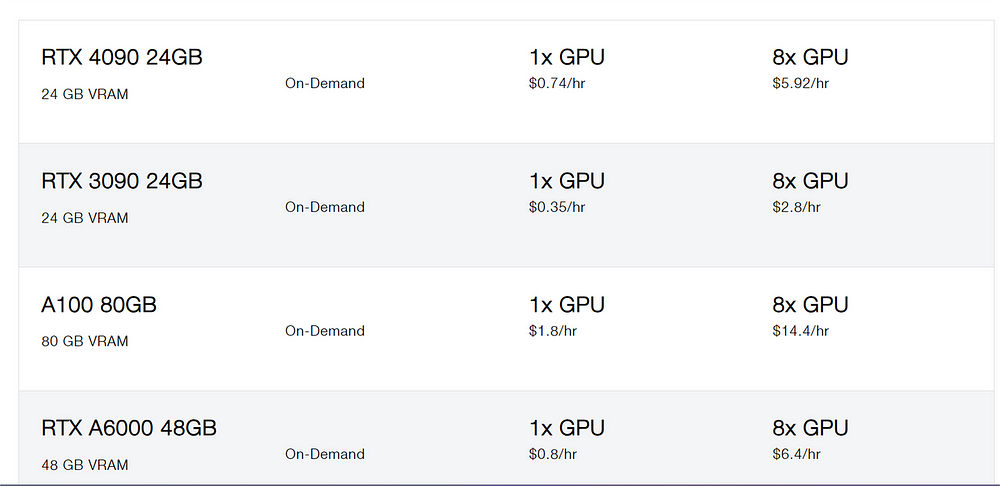

Begin by outlining the requirements for your application, such as the type of GPU, the amount of memory, and CPU cores. Novita AI Pods offers a variety of GPU models, each tailored to specific computational needs, making it easy to select the right resources for your Pod.

Please refer to the official website for the specific prices.

Utilize Novita AI’s User Interface:

Navigate through Novita AI’s intuitive interface to specify the Pod’s configuration. Here, you can choose from pre-configured templates or create a custom setup that suits your application’s unique demands.

Select Frameworks:

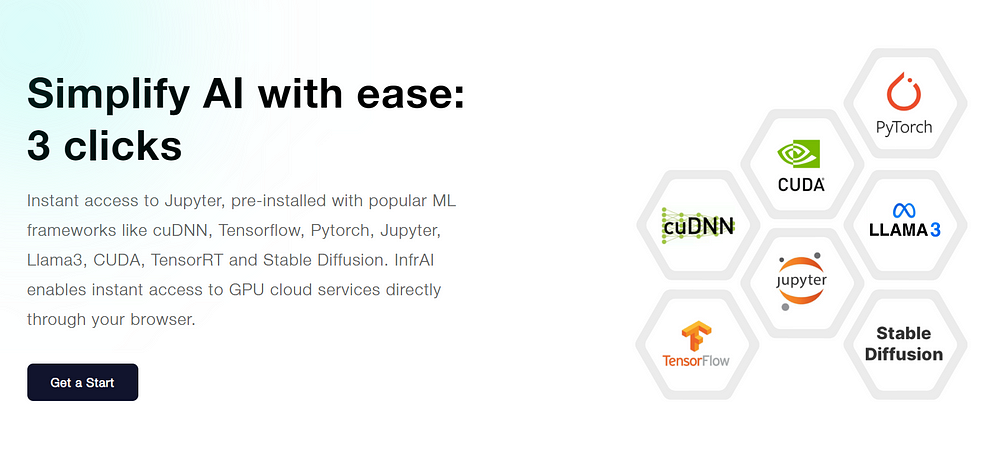

Novita AI supports a wide array of frameworks, including but not limited to TensorFlow, PyTorch, CUDA, and cuDNN. Choose the ones that align with your development stack.

Deploy the Pod:

Once your Pod configuration is ready, deploy it to the GPU cloud. Novita AI’s platform automates the deployment process, getting your application up and running quickly.
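Before opening the interface, it can help to write the requirements down in a structured form. The sketch below is a purely local checklist and does not call any Novita AI API: the `PodRequest` class, its fields, its default values, and the GPU model name are all hypothetical, and the framework list only repeats the names mentioned above, which the platform describes as non-exhaustive.

```python
from dataclasses import dataclass, field

# Frameworks named in this article; the platform's list is not limited to these.
FRAMEWORKS_NAMED_IN_ARTICLE = {"TensorFlow", "PyTorch", "CUDA", "cuDNN"}


@dataclass
class PodRequest:
    """Hypothetical planning record; this is not a Novita AI data structure."""
    gpu_model: str
    gpu_count: int = 1
    cpu_cores: int = 4      # illustrative default
    memory_gb: int = 16     # illustrative default
    frameworks: list = field(default_factory=list)

    def problems(self):
        """Return a list of human-readable issues to resolve before deploying."""
        issues = []
        if not self.gpu_model:
            issues.append("gpu_model is required")
        if self.gpu_count < 1:
            issues.append("gpu_count must be at least 1")
        if self.cpu_cores < 1 or self.memory_gb < 1:
            issues.append("cpu_cores and memory_gb must be positive")
        unverified = sorted(set(self.frameworks) - FRAMEWORKS_NAMED_IN_ARTICLE)
        if unverified:
            issues.append("check platform support for: " + ", ".join(unverified))
        return issues


if __name__ == "__main__":
    request = PodRequest(gpu_model="example-gpu", frameworks=["PyTorch", "CUDA"])
    print(request.problems() or "ready to configure in the UI")
```

An empty result means the checklist found nothing to resolve. Anything it flags is a prompt to check the platform’s own documentation, not a definitive verdict.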

Best Practices for Pod Management

Monitor Performance:

Regularly monitor your Pod’s performance using Novita AI’s monitoring tools. Keep an eye on metrics like CPU and memory usage to ensure your application is performing optimally.
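Novita AI’s monitoring tools are not described in detail here, so the following is a generic sketch of a check you could run inside a GPU Pod. It assumes an NVIDIA GPU with the `nvidia-smi` command available, and the function names are made up for this example.

```python
import shutil
import subprocess

# Ask nvidia-smi for utilization and memory figures as bare CSV values.
QUERY = [
    "nvidia-smi",
    "--query-gpu=utilization.gpu,memory.used,memory.total",
    "--format=csv,noheader,nounits",
]


def parse_gpu_csv(text):
    """Parse lines such as '35, 1024, 24576' into one dict per GPU.

    Assumes every field is numeric; values like '[N/A]' would raise ValueError.
    """
    rows = []
    for line in text.strip().splitlines():
        util, used, total = (float(value) for value in line.split(","))
        rows.append({"util_pct": util, "mem_used_mib": used, "mem_total_mib": total})
    return rows


def read_gpu_stats():
    """Return current GPU stats, or an empty list if nvidia-smi is missing."""
    if shutil.which("nvidia-smi") is None:
        return []
    result = subprocess.run(QUERY, capture_output=True, text=True, check=True)
    return parse_gpu_csv(result.stdout)


if __name__ == "__main__":
    for index, gpu in enumerate(read_gpu_stats()):
        mem_pct = 100 * gpu["mem_used_mib"] / gpu["mem_total_mib"]
        print(f"GPU {index}: {gpu['util_pct']:.0f}% busy, {mem_pct:.0f}% memory used")
```

Running a check like this on a schedule gives a simple record of whether the GPU you are paying for is actually being used.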

Manage Data with Volumes:

Use volumes to manage data within your Pods. Novita AI Pods supports various storage solutions, allowing you to choose the one that best fits your data management strategy.

Ensure Security:

Implement security best practices, such as using secure container images, restricting network access, and regularly updating your application and its dependencies.

Optimize for Cost:

Use Novita AI’s cost-effective resources and pay-as-you-go pricing model to optimize your spending. Only pay for the resources you use, and take advantage of any discounts or promotions offered by Novita AI.
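To see why pay-as-you-go matters, a quick back-of-the-envelope estimate helps. The hourly rate below is a placeholder, not a Novita AI price (check the official website for real figures), and the function name is an assumption for this sketch.

```python
def estimate_cost(hourly_rate, hours, gpu_count=1):
    """Estimate pay-as-you-go spend: rate per GPU-hour x hours x GPUs."""
    if hourly_rate < 0 or hours < 0 or gpu_count < 1:
        raise ValueError("rate and hours must be non-negative, gpu_count >= 1")
    return round(hourly_rate * hours * gpu_count, 2)


if __name__ == "__main__":
    PLACEHOLDER_RATE = 0.50  # hypothetical price per GPU-hour, not a real quote
    always_on = estimate_cost(PLACEHOLDER_RATE, hours=24 * 30)
    work_hours_only = estimate_cost(PLACEHOLDER_RATE, hours=8 * 22)
    print(f"Always on for a month: {always_on}")
    print(f"Working hours only:    {work_hours_only}")
```

Whatever the actual rate is, the ratio between the two figures shows how much stopping idle Pods can save under usage-based billing.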

By following these steps and best practices, you can create and manage Pods on Novita AI Pods with confidence, and deploy, scale, and maintain your applications efficiently. This approach streamlines cloud application management and lets developers focus on innovation rather than infrastructure concerns.

Community and Support in Novita AI Pods

Novita AI Pods fosters a user community where enthusiasts and professionals can discuss Pods and associated technologies. The community serves as a hub for sharing insights, troubleshooting, and collaborative learning. In addition, Novita AI Pods offers support channels so that users can get assistance when they encounter issues with their Pods. Novita AI Pods provides:

User Communities: Direct engagement with a community of users who actively discuss and share experiences with Pods and related technologies, offering a platform for collaborative problem-solving and knowledge exchange.

Support Channels: Access to comprehensive support resources, including 24/7 customer service, so that issues are addressed promptly. The support system is designed to cater to a diverse range of clients, providing personalized assistance through dedicated service channels.

Novita AI Pods’ dedication to community building and customer support reflects its understanding of how much a strong network matters to its users as they work with GPU computing.

Conclusion

In summary, Pods represent a shift towards a more agile, efficient, and scalable cloud infrastructure. By encapsulating applications and their dependencies into a single, manageable unit, Pods offer a streamlined approach to deploying and managing workloads in a distributed environment. This improves operational efficiency and lets developers iterate quickly. Novita AI Pods embodies these principles by providing a platform that applies the Pod model to high-performance GPU computing services. With its focus on user communities and comprehensive support, Novita AI Pods aims to be a reliable partner for businesses and individuals who want to get the most out of GPU-driven applications.

Originally published at Novita AI
